Nonlinear Regression

January 9, 2018

1. Idea

[watch video]

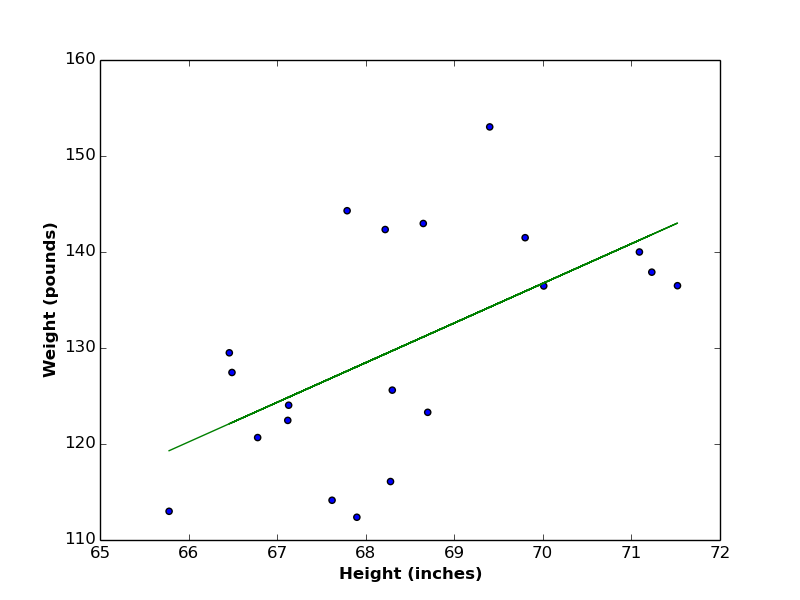

In a previous post, we saw the linear relationship between heights and weights of a group of persons (see the below figure), where both variables are growing together and shrinking together as well.

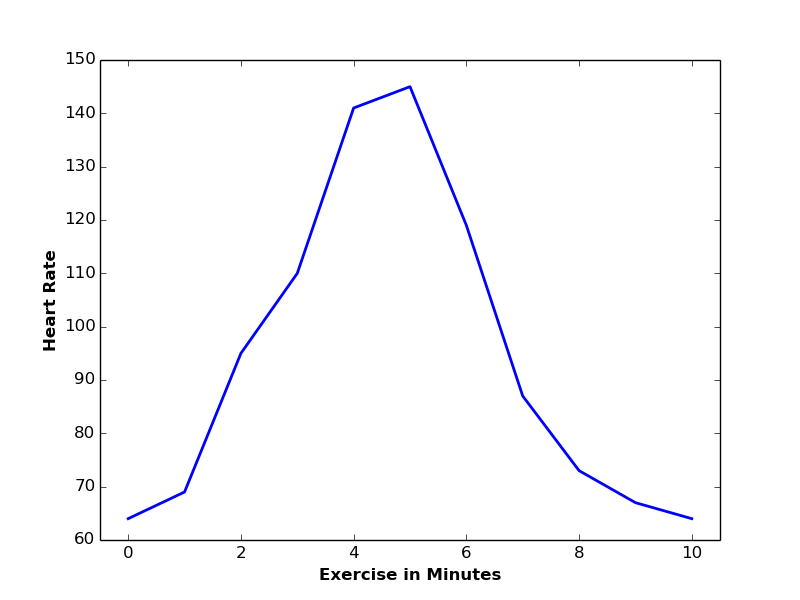

This is however not the case with every two variables. For instance, the average heart beats of a person has a nonlinear relationship with the number of minutes that person is spending in physical exercising. Number of heart beats is rapidly increasing during the first 5 minutes. Afterwards, it starts to decrease.

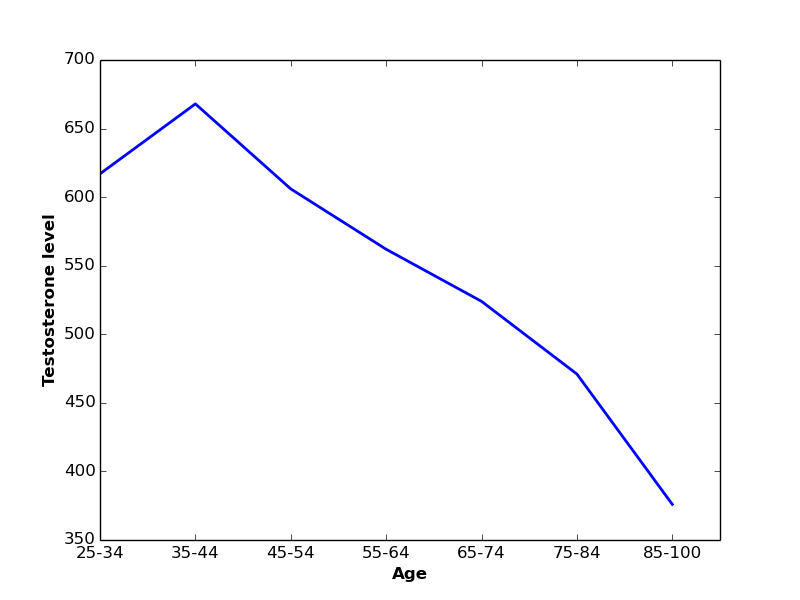

As another example, levels of testosterone hormone increase in men in the age between 25 and 44 years old (on average). Afterwards, testosterone level starts to decrease monotonically as men become older.

In such scenarios, a linear function can not express the relation between these variables, and a non-linear (polynomial) function should be used instead.

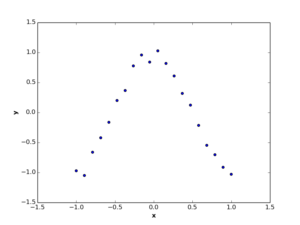

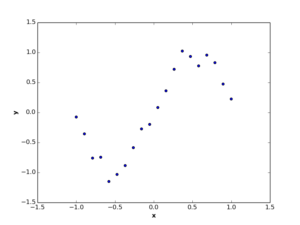

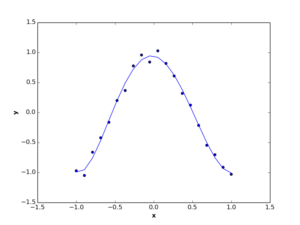

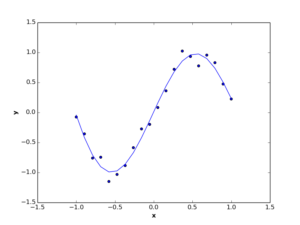

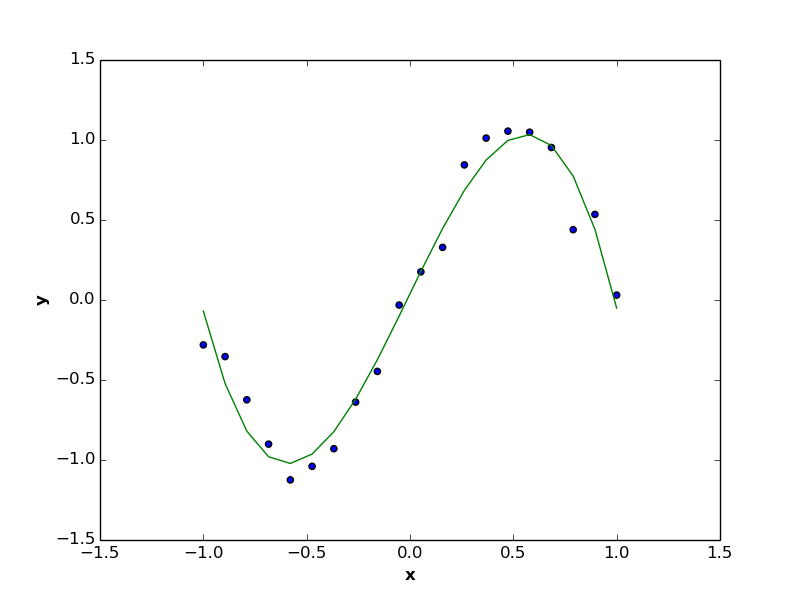

Suppose we have a set of observations represented by two dimensions \(x\) and \(y\), where these observations have non-linear relation, like the below figures.

Now suppose you need to find a function to represent the relation between these 2 variables (also called features). In this case, the function should be nonlinear (polynomial of degree \(>1\)) function.

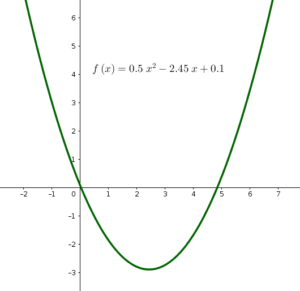

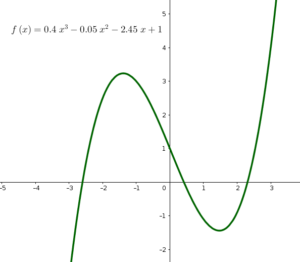

As a reminder, a polynomial function of degree \(>1\) is a function that has more than one direction. In the below figure, the left-side function has two directions, while the right-hand function has three directions.

2. Theory

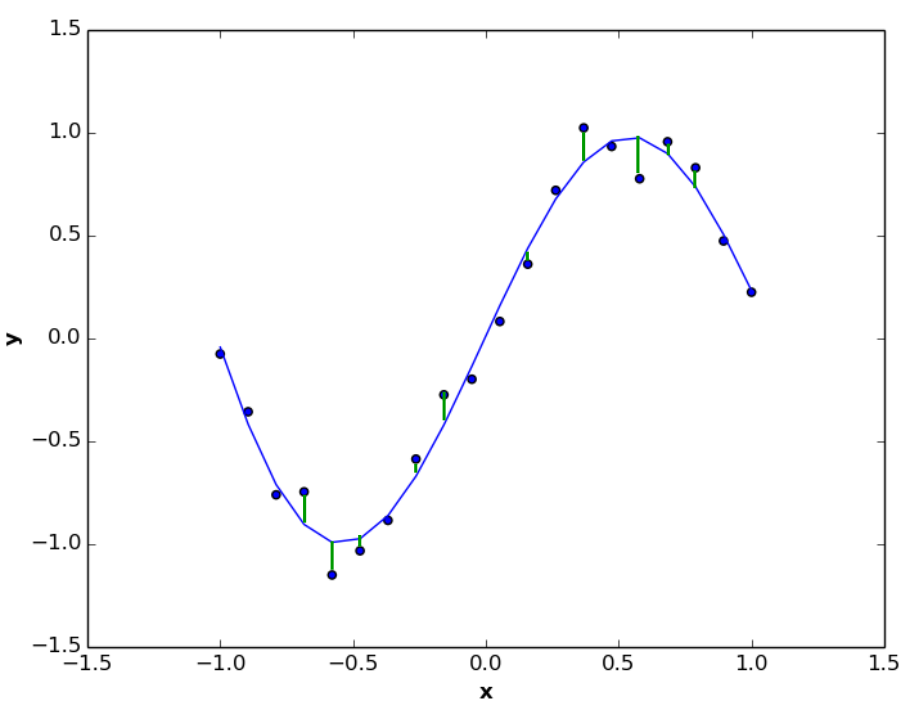

As we explained in linear regression article,we want to find a nonlinear regression function (like the blue function below) that minimize distances (vertical green lines) between true and predicted values of dependent variable \(y\) (i.e Least Squares approach).

The error function we need to minimize is defined as:

\begin{equation}

E(\mathbf{w}) = \frac{1}{N} \sum\limits_{i=1}^N [y^{(i)}-f(x^{(i)},\mathbf{w})]^2\tag{1}

\end{equation}

which is the same as that of linear regression. The only difference is with hypothesis function \(f(x^{(i)},\mathbf{w})\). This function will be nonlinear with respect to predictor \(x\):

\begin{equation}

f(x^{(i)},\mathbf{w}) = w_0 {x^{(i)}}^0 + w_1 {x^{(i)}}^1 + w_2 {x^{(i)}}^2 + w_3 {x^{(i)}}^3 + … + w_P {x^{(i)}}^P\tag{2}

\end{equation}

Or in a compact form:

\begin{equation}

f(x^{(i)},\mathbf{w}) = \sum\limits_{p=0}^P w_p {x^{(i)}}^p\tag{3}

\end{equation}

where \(P\) is the polynomial degree of function \(f(x^{(i)},\mathbf{w})\).

In order to minimize function \(E(\mathbf{w})\) (Equation 1), we need to put its derivatives with respect to \(\mathbf{w}\) to \(0\):

\begin{equation}

\nabla E(\mathbf{w})= 0\tag{4}

\end{equation}

Let’s compute the derivative of \(E(\mathbf{w})\) with respect to each coefficient \(w \in \mathbf{w}\):

- For \(w_0\):

\begin{equation}

\frac{\partial E(\mathbf{w})}{\partial w_0}= \frac{1}{N} \sum\limits_{i=1}^N \frac{\partial [y^{(i)} – (w_0 + w_1 {x^{(i)}} + w_2 {x^{(i)}}^2 + w_3 {x^{(i)}}^3 + … + w_P {x^{(i)}}^P)]^2}{\partial w_0}\tag{5}

\end{equation}

\begin{equation}

\frac{\partial E(\mathbf{w})}{\partial w_0}= \frac{1}{N} \sum\limits_{i=1}^N \frac{\partial [y^{(i)} – (w_0 + \sum\limits_{p=1}^P w_p {x^{(i)}}^p)]^2}{\partial w_0}\tag{6}

\end{equation}

\begin{equation}

\frac{\partial E(\mathbf{w})}{\partial w_0}= \frac{1}{N} \sum\limits_{i=1}^N \frac{\partial [{y^{(i)}}^2 -2 w_0 y^{(i)} – 2 y^{(i)} \sum\limits_{p=1}^P w_p {x^{(i)}}^p + w_0^2 + 2w_0 \sum\limits_{p=1}^P w_p {x^{(i)}}^p + \sum\limits_{p=1}^P w_p {x^{(i)}}^{2p}]}{\partial w_0}\tag{7}

\end{equation}

\begin{equation}

\frac{\partial E(\mathbf{w})}{\partial w_0}= \frac{1}{N} \sum\limits_{i=1}^N -2 y^{(i)} + 2w_0 + 2 \sum\limits_{p=1}^P w_p {x^{(i)}}^p\tag{8}

\end{equation}

\begin{equation}

\frac{\partial E(\mathbf{w})}{\partial w_0}= \frac{-2}{N} \sum\limits_{i=1}^N [y^{(i)} – (w_0 + \sum\limits_{p=1}^P w_p {x^{(i)}}^p)]\tag{9}

\end{equation}

- For \(w_1\):

\begin{equation}

\frac{\partial E(\mathbf{w})}{\partial w_1}= \frac{-2}{N} \sum\limits_{i=1}^N [y^{(i)} – (w_0 + \sum\limits_{p=1}^P w_p {x^{(i)}}^p)]x^{(i)}\tag{10}

\end{equation}

- For \(w_2\):

\begin{equation}

\frac{\partial E(\mathbf{w})}{\partial w_2}= \frac{-2}{N} \sum\limits_{i=1}^N [y^{(i)} – (w_0 + \sum\limits_{p=1}^P w_p {x^{(i)}}^p)]{x^{(i)}}^2\tag{11}

\end{equation}

- For \(w_p\) of any polynomial degree \(p \in [0:P]\):

\begin{equation}

\frac{\partial E(\mathbf{w})}{\partial w_p}= \frac{-2}{N} \sum\limits_{i=1}^N [y^{(i)} – (w_0 + \sum\limits_{p=1}^P w_p {x^{(i)}}^p)] {x^{(i)}}^p,

\quad \quad

\forall p \in [0:P]\tag{12}

\end{equation}

3. Closed Form Approach

[watch video]

In this method, we convert equation (12) into \(P+1\) non-linear system, and solve the generated equations system using metrics’ operations. Let’s equalize equation (12) to 0 and re-order it:

\begin{equation}

\frac{-2}{N} \sum\limits_{i=1}^N [y^{(i)} – (w_0 + \sum\limits_{p=1}^P w_p {x^{(i)}}^p)] {x^{(i)}}^p = 0\tag{13}

\end{equation}

\begin{equation}

\sum\limits_{i=1}^N {x^{(i)}}^p y^{(i)} = \sum\limits_{i=1}^N {x^{(i)}}^p (w_0 + \sum\limits_{p=1}^P w_p {x^{(i)}}^p)\tag{14}

\end{equation}

\begin{equation}

\sum\limits_{i=1}^N {x^{(i)}}^p y^{(i)} = w_0 N + \sum\limits_{p=1}^P w_p {x^{(i)}}^{2p}\tag{15}

\end{equation}

This non-linear equation system is written in matrix form as:

\begin{gather}

\begin{bmatrix}

\sum\limits_{i=1}^N y^{(i)} \\\\

\sum\limits_{i=1}^N x^{(i)} y^{(i)} \\\\

\sum\limits_{i=1}^N {x^{(i)}}^2 y^{(i)} \\\\

\vdots \\\\

\sum\limits_{i=1}^N {x^{(i)}}^p y^{(i)}

\end{bmatrix}

=

\begin{bmatrix}

w_0 \\\\

w_1 \\\\

w_2 \\\\

\vdots \\\\

w_p

\end{bmatrix}

\begin{bmatrix}

N & \sum\limits_{i=1}^N x^{(i)} & \sum\limits_{i=1}^N {x^{(i)}}^2 & … & \sum\limits_{i=1}^N {x^{(i)}}^p \\\\

\sum\limits_{i=1}^N x^{(i)} & \sum\limits_{i=1}^N {x^{(i)}}^2 & \sum\limits_{i=1}^N {x^{(i)}}^3 & … & \sum\limits_{i=1}^N {x^{(i)}}^{p+1} \\\\

\sum\limits_{i=1}^N {x^{(i)}}^2 & \sum\limits_{i=1}^N {x^{(i)}}^3 & \sum\limits_{i=1}^N {x^{(i)}}^4 & … & \sum\limits_{i=1}^N {x^{(i)}}^{p+2} \\\\

\vdots & \vdots & \vdots & \vdots\\\\

\sum\limits_{i=1}^N {x^{(i)}}^p & \sum\limits_{i=1}^N {x^{(i)}}^{p+1} & \sum\limits_{i=1}^N {x^{(i)}}^{p+2} & … & \sum\limits_{i=1}^N {x^{(i)}}^{2p}

\end{bmatrix} \tag{16}

\end{gather}

which simulates the form:

\begin{equation}

\mathbf{B} = \mathbf{wA}\tag{17}

\end{equation}

then the vector \(\mathbf{w}\) is computed as:

\begin{equation}

\mathbf{w} = \mathbf{A}^{-1} \mathbf{B}\tag{18}

\end{equation}

4. Gradient Descent Algorithm

We follow the same gradient descent algorithm of linear regression (listed below). The only difference will be with the new error function defined in equation (3) and its derivatives (equation (12)).

Algorithm1 GradientDescent(\(E(\mathbf{w}),\mathbf{w},\eta\))

- Input:

- \(E(\mathbf{w})\): cost function

- \(\mathbf{w}\): initial values of coefficients vector

- \(\eta\): learning rate

- Output:

- \(\mathbf{w}\): updated values of coefficients vector

- Procedure:

- repeat

- \(w_p=w_p – \eta \frac{\partial E(\mathbf{w})}{\partial w_p}, \forall w_p \in \mathbf{w}\)

- until \(\nabla E(\mathbf{w})= 0\)

- return \(\mathbf{w}\)

Coefficients \(w_p\)’s in step (8.A.) are updated as follows:

\begin{equation}

w_p = w_p – \eta \frac{-2}{N} \sum\limits_{i=1}^N [y^{(i)} – (w_0 + \sum\limits_{p=1}^P w_p {x^{(i)}}^p)] {x^{(i)}}^p\tag{19}

\end{equation}

5. Multivariate Nonlinear Regression

Let’s consider the following multivariate nonlinear function (equation 20) between dependent variable \(y\), and vector of independent variables \(\mathbf{x} = [x_1,x_2]\):

\begin{equation}

f(\mathbf{x}^{(i)},\mathbf{w}) = w_0 + w_1 x_1^{(i)} + w_2 x_2^{(i)} + w_3 {x_1^{(i)}}^2 + w_4 x_1^{(i)} x_2^{(i)} + w_5 {x_2^{(i)}}^2\tag{20}

\end{equation}

This nonlinear equation can be transformed to linear equation, by introducing a new vector \(\mathbf{z}\):

\begin{equation}

\mathbf{z} = [x_1^{(i)},x_2^{(i)}, {x_1^{(i)}}^2, x_1^{(i)} x_2^{(i)}, {x_2^{(i)}}^2]\tag{21}

\end{equation}

By substituting \(\mathbf{z}\) with it’s corresponded values in equation (20), we obtain the following linear equation:

\begin{equation}

f(\mathbf{z},\mathbf{w}) = w_0 + w_1 z_1 + w_2 z_2 + w_3 z_3 + w_4 z_4 + w_5 z_5\tag{22}

\end{equation}

Equation (22) converts the multivariate nonlinear regression into linear regression, where we can use the same methods of multivariate linear regression.

5.1 Closed Form Approach

We build the following \(N \times (M+1)\) matrix \(\mathbf{X}\):

\begin{gather}

\mathbf{X}

= \begin{bmatrix}

1 & z_1^{(1)} & z_2^{(1)} & z_3^{(1)} & z_4^{(1)} & z_5^{(1)} \\\\

1 & z_1^{(2)} & z_2^{(2)} & z_3^{(2)} & z_4^{(2)} & z_5^{(2)} \\\\

\vdots \\\\

1 & z_1^{(N)} & z_2^{(N)} & z_3^{(N)} & z_4^{(N)} & z_5^{(N)}

\end{bmatrix}

= \begin{bmatrix}

1 & x_1^{(1)} & x_2^{(1)} & {x_1^{(1)}}^2 & x_1^{(1)} x_2^{(1)} & {x_2^{(1)}}^2 \\\\

1 & x_1^{(2)} & x_2^{(2)} & {x_1^{(2)}}^2 & x_1^{(2)} x_2^{(2)} & {x_2^{(2)}}^2 \\\\

\vdots \\\\

1 & x_1^{(N)} & x_2^{(N)} & {x_1^{(N)}}^2 & x_1^{(N)} x_2^{(N)} & {x_2^{(N)}}^2

\end{bmatrix}\tag{23}

\end{gather}

and a \(N\times 1\) target vector \(\mathbf{Y}\):

\begin{gather}

\mathbf{Y}

= \begin{bmatrix}

y^{(1)} \\\\

y^{(2)} \\\\

\vdots \\\\

y^{(N)}

\end{bmatrix}\tag{24}

\end{gather}

The vector of coefficients \(\mathbf{w}\) is calculated as:

\begin{equation}

\mathbf{w} = (\mathbf{X}^T \mathbf{X})^{-1} \mathbf{X}^T \mathbf{Y}\tag{25}

\end{equation}

5.2 Gradient Descent Algorithm

We use the same algorithm above with the following coefficients’ updates:

\begin{equation}

w_0= w_0 – \eta\frac{-2}{N} \sum\limits_{i=1}^N y^{(i)} – (w_0 + \sum\limits_{j=1}^5 w_j z_j)\tag{26}

\end{equation}

\begin{equation}

w_j= w_j – \eta\frac{-2}{N} \sum\limits_{i=1}^N z_j [y^{(i)} – (w_0 + \sum\limits_{j=1}^5 w_j z_j)]\tag{27}

\end{equation}

We also substitute each \(z_j\) in equations (26) and (27) with it’s corresponded value in \(\mathbf{x}\) in equation (21).

6. Polynomial Degree Selection (Bias -Variance Tradeoff)

[watch video]

A question may rise in the discussion of nonlinear regression: which polynomial degree (\(p\)) should we use to build our regression function? There is no standard answer to this question. A good way to estimate the value of \(p\) is to try out different values and select between. Your selection should be in the middle between underfitting and overfitting situations.

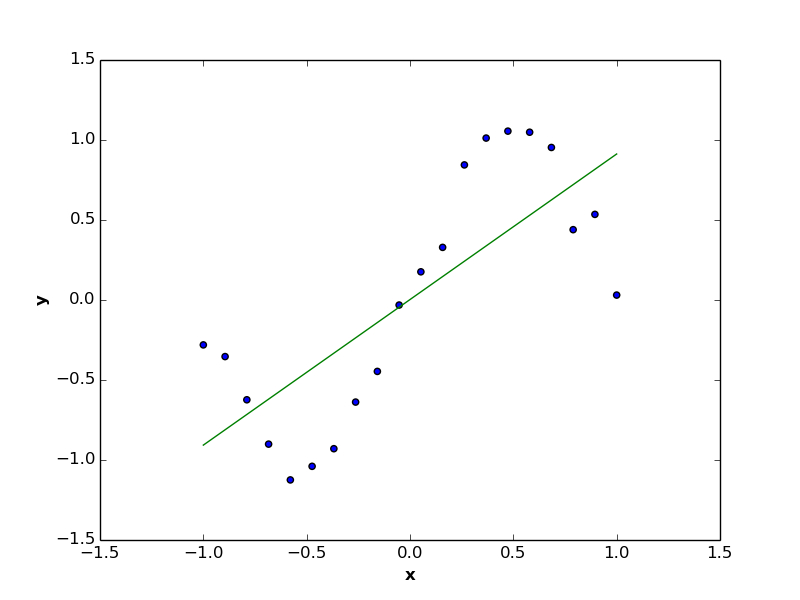

On the one hand, the case of underfitting (e.g. \(p=1\)) generate a regression function which is highly biased toward erroneous assumptions of training data (like the case of below figure, where nonlinear variable is predicted by linear function), and thus is far from simulating the relationship between target and predictor variables.

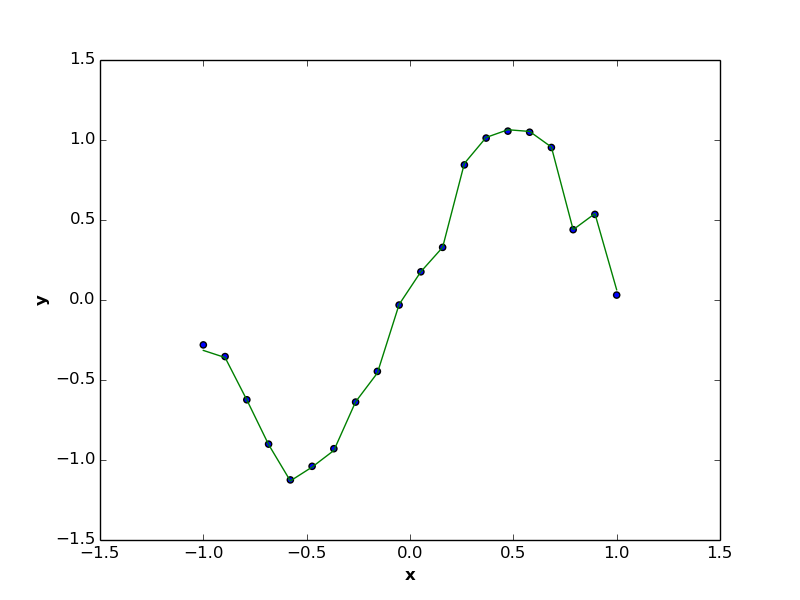

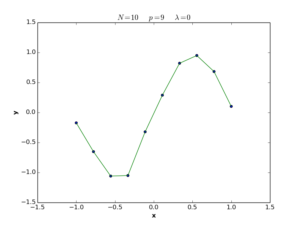

On the other hand, the case of overfitting (e.g. \(p=N-1\) in below figure) generates regression function with high variance, makes it highly sensitive to small fluctuations in the training data, and accordingly recording error rate close to \(0\). Overfitting is usually generating models that can not precisely interact with unseen data, as these models are highly biased to the training set of observations, and the corresponded regression functions are usually quite complicated.

The proper regression model (like the one in the following figure) is usually estimated by making a tradeoff between underfitting and overfitting scenarios, where the regression function is minimizing the error rate, while keeping itself as simple as possible (i.e. with lowest possible number of coefficients).

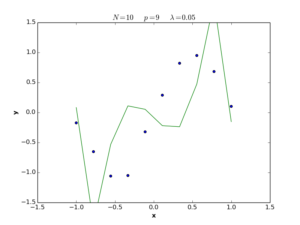

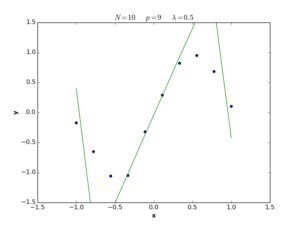

6.1 Regularization

A common technique to overcome the problem of overfitting is to add a penalty term to the error function \(E(\mathbf{w})\). The role of this penalty term – sometimes called also smooth term – is to minimize the number of coefficients used in regression function. Now, we define the new error function (\(\tilde{E}\)) to be:

\begin{equation}

\tilde{E}(\mathbf{w}) = \frac{1}{N} \sum\limits_{i=1}^N [y^{(i)}-f(x^{(i)},\mathbf{w})]^2 + \frac{1}{2} \lambda \sum\limits_{p=1}^P w_p^2\tag{28}

\end{equation}

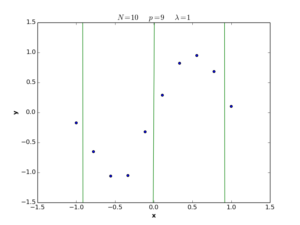

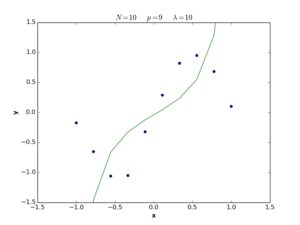

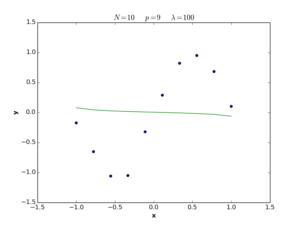

The \(\lambda\) term in equation (28) is called the regularization coefficient, and its role is to control the simplicity of the error function in terms of \(\mathbf{w}\) length. If \(\lambda\) is large, the function tends to be simple, and we move to the underfitting situation, while if \(\lambda\) is small, the function tends to be complex and we move to the overfitting situation. If \(\lambda = 0\), the regularization term is deleted and we return to the definition (1) of error function. The following plots show nonlinear regression functions generated by equation (28) with different \(\lambda\) values.

Please note also, that the intercept coefficient \(w_0\) is excluded from the penalty term in (28), to make our error function independent from the origin of target variable \(y\). The intercept coefficient \(w_0\) has nothing to do with the simplicity or complexity degree of the regression function. The only effect of changing \(w_0\) is to move the fitted curve from it’s place into one direction of the target axis. Therefore, \(w_0\) does not need to be regularized with other coefficients.

Now, we will expand equation (28) by substituting the hypothesis function with the compact form of nonlinear regression formula in equation (3):

\begin{equation}

\tilde{E}(\mathbf{w}) = \frac{1}{N} \sum\limits_{i=1}^N [y^{(i)}-(\sum\limits_{p=0}^P w_p {x^{(i)}}^p)]^2 + \frac{1}{2} \lambda \sum\limits_{p=1}^P w_p^2\tag{29}

\end{equation}

and compute the derivative with respect to each \(w_p \in \mathbf{w}\):

\begin{equation}

\frac{\partial \tilde{E}(\mathbf{w})}{\partial w_p}= \frac{-2}{N} \sum\limits_{i=1}^N [y^{(i)} – (w_0 + \sum\limits_{p=1}^P w_p {x^{(i)}}^p)] {x^{(i)}}^p + \frac{\partial (\frac{1}{2} \lambda w_p^2)}{\partial w_p}\tag{30}

\end{equation}

which is reduced to:

\begin{equation}

\frac{\partial \tilde{E}(\mathbf{w})}{\partial w_p}= \frac{-2}{N} \sum\limits_{i=1}^N [y^{(i)} – (w_0 + \sum\limits_{p=1}^P w_p {x^{(i)}}^p)] {x^{(i)}}^p + \lambda w_p\tag{31}

\end{equation}

Putting equation (31) to zero will generate:

\begin{equation}

\frac{-2}{N} \sum\limits_{i=1}^N [y^{(i)} – \sum\limits_{p=0}^P w_p {x^{(i)}}^p] {x^{(i)}}^p + \lambda w_p = 0\tag{32}

\end{equation}

6.1.1 Closed Form Approach

Let’s re-write function (32) into matrix form:

\begin{equation}

[\mathbf{Y} – \mathbf{wX}] \mathbf{X}^T + \lambda \mathbf{w} = 0\tag{33}

\end{equation}

where \(\mathbf{X}\), \(\mathbf{Y}\), and \(\mathbf{w}\) are defined in equation (16). Now we re-arrange equation (33):

\begin{equation}

\mathbf{X}^T \mathbf{Y}= \mathbf{X}^T \mathbf{Xw} – \lambda \mathbf{w}\tag{34}

\end{equation}

\begin{equation}

\mathbf{X}^T \mathbf{Y}= \mathbf{w} [\mathbf{X}^T \mathbf{X} – \lambda\mathbf{I}] \tag{35}

\end{equation}

Coefficients are calculated as:

\begin{equation}

\mathbf{w} = [\mathbf{X}^T \mathbf{X} – \lambda\mathbf{I}]^{-1} \mathbf{X}^T \mathbf{Y} \tag{36}

\end{equation}

For your convenience, putting \(\lambda = 0\) in (36) will give us same solution as equation (25).

Please note, that both (25) and (36) equations are valid for both uni- and multivariate regression as closed-form.

6.1.2 Gradient Descent Algorithm

In order to apply gradient descent algorithm, we add the penalty term \(\lambda w_p\) to equation (27):

\begin{equation}

w_0= w_0 – \eta\frac{-2}{N} \sum\limits_{i=1}^N y^{(i)} – (w_0 + \sum\limits_{p=1}^P w_p {x^{(i)}}^p) \tag{37}

\end{equation}

\begin{equation}

w_p= w_p – \eta\frac{-2}{N} \sum\limits_{i=1}^N [y^{(i)} – (w_0 + \sum\limits_{p=1}^P w_p {x^{(i)}}^p)]{x^{(i)}}^p + \lambda w_p,

\quad \quad

\forall p \in [1:P]\tag{38}

\end{equation}

The intercept coefficient \(w_0\) is computed the same as in equation (26). The formulas (37) and (38) are valid for both uni- and multivariate regressions.

7. Implementation in Python

You can find python implementations of the above algorithms on my GitHub.